Security Square Mall has some nice neon, palm trees, and brick floors. Its occupancy wasn’t too bad as of a year ago, but now that Macy’s is gone, there may be problems.

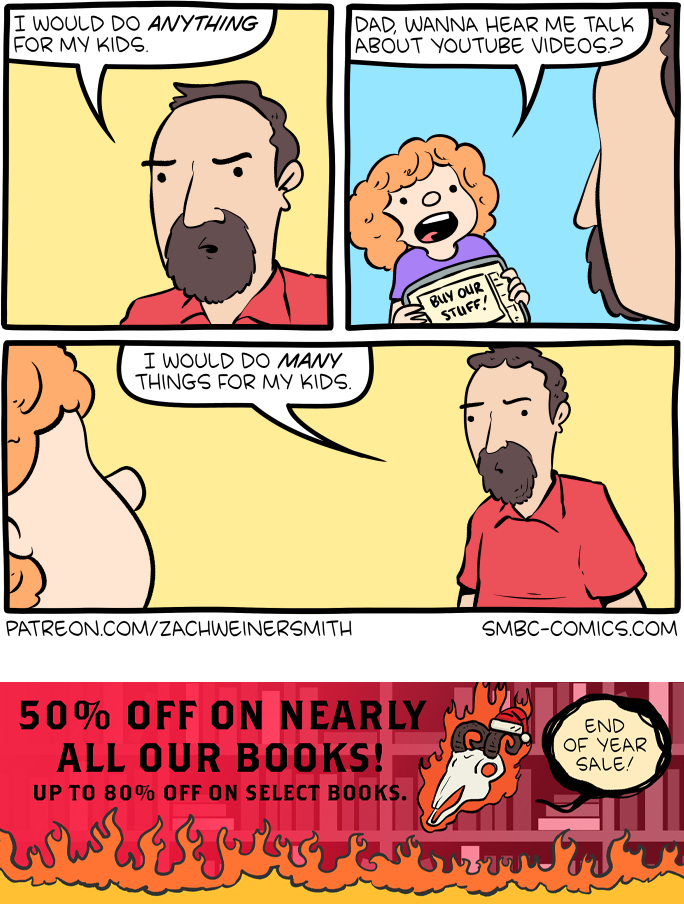

Hovertext:

Comics like these are the real reason my kids aren't old enough to read SMBC yet.

Hovertext:

Also you can add irr- to the front to negate the idea.

The Daily Beast reports:

Katie Miller, wife of Stephen Miller and former Elon Musk devotee, made an appearance on Jesse Watters Primetime on Tuesday night and joined Watters in joking about her husband’s sexual prowess immediately after discussing the murder of fellow conservative Charlie Kirk.

As their interview about Kirk was winding down—in it, they discussed his widow, Erika, and the future of Kirk’s Turning Point USA under her leadership—Watters said to Miller, “Just so the audience is aware, you are married to Stephen Miller, so you are the envy of all women.”

“The sexual matador, right?” Miller replied. “He’s an incredibly inspiring man who gets me going in the morning with his speeches, being like, ‘Let’s start the day, I’m going to defeat the left and we are going to win.’ He wakes up the day ready to carry out the mission that President Trump was elected to do,” Miller said.

Read the full article.

Watters: You’re married to Stephen Miller. You’re the envy of all women

Miller: The sexual matador, right?…He’s an incredibly inspiring man who gets me going in the morning with his speeches being like let’s start the day, I’m going to defeat the left pic.twitter.com/iCIE3AdMVR

— Republicans against Trump (@RpsAgainstTrump) September 24, 2025

The post Voldemort’s Wife Claims He Is A “Sexual Matador” appeared first on Joe.My.God..